An App That Matters: Getting Great Results with A/B Testing

McKinsey found that a one-point improvement in customer satisfaction on a ten-point scale translates to at least a three-percentage-point increase in revenue.

The right design strategies can boost your app’s usability, customer satisfaction, and your company’s ROI. An A/B test is one of the best ways to test, identify, and implement the ideal strategies for your mobile app’s growth.

As an expert mobile app development team, we’ve helped many companies run UX evaluations to understand what’s standing in the way of their customers converting.

Where does your mobile app currently stand?

Before you conduct an A/B test, it’s important to have a sense of where your product currently stands. A UI/UX audit report measures the current usability of your mobile app’s UI, which will provide you with a sense of where improvements might be made.

In our previous post, we examined how to assemble a highly efficient and motivated team to create a remarkable mobile application. Now, let’s take a closer look at how to build useful features with your newly constructed team by using A/B testing.

What is Mobile App A/B Testing?

Mobile app A/B testing, also known as split testing, is the process of comparing two versions of the same product or feature. During the test, each variation is shown to a different segment of users. The winning variation is the one that performs best on the desired outcome.

The Importance of A/B Testing for UX Mobile App Development

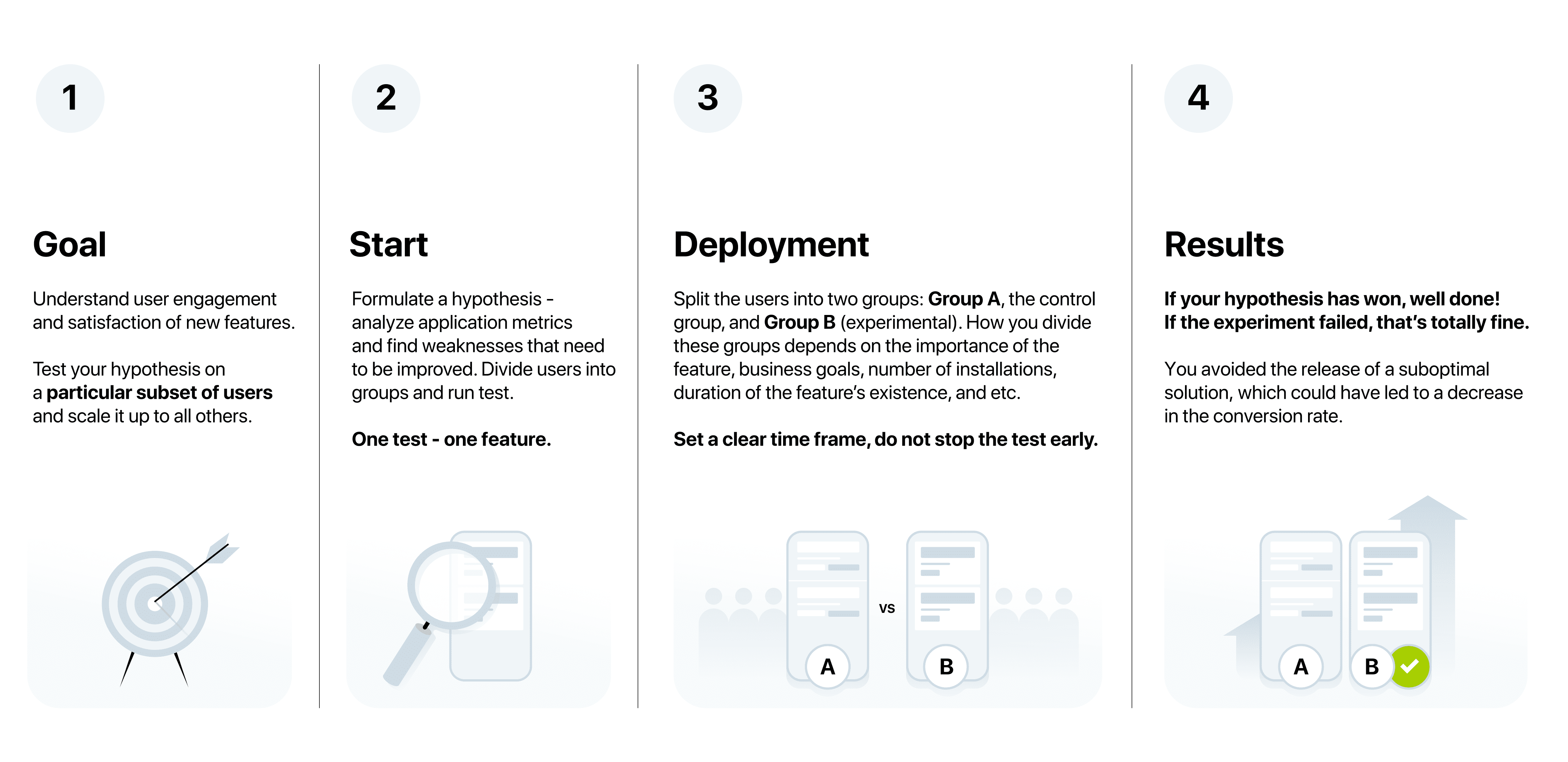

Mobile app A/B testing is one way to optimize the usability of mobile applications. It’s a fantastic way to understand user engagement and satisfaction with new features. The key idea is to test your hypothesis on a particular subset of users and, if the hypothesis proves true, roll the change out to everyone else. That way, future releases include only features that have already been “approved” by users.

When A/B testing for mobile apps, we must first formulate the hypothesis we want to check. To do so, marketers and analysts use mobile app A/B testing tools to analyze application metrics and find weaknesses that need to be improved. This results in a UX audit report. From there, users are divided into groups and the A/B test is run, with each group seeing only one implementation of the feature in the mobile app. We then collect the results and adjust accordingly.

The Benefits of A/B Testing for UX and UI of Mobile App

A quality user experience (UX) and user interface (UI) are essential for a mobile application. Done properly, optimizing the UX/UI through mobile app A/B testing can:

- boost sales,

- increase retention, and

- improve customer satisfaction.

A/B testing helps determine which elements of your mobile app are and aren’t appealing to your users. Without mobile app A/B testing, it can be difficult to know what your customers want and what they will respond best to. When approaching mobile app A/B testing, you might look at:

- where to put your CTA,

- the ideal color palette,

- button placement,

- copy,

- image placement,

- screen layouts,

- icons, or

- other visual design elements.

The benefits of mobile app A/B testing go beyond helping prove or disprove a hypothesis. Benefits of A/B testing for mobile apps include:

- Cost benefits. Mobile app A/B testing with a focus on UI and UX design is a cost-effective means of improving your app and your conversions. You don’t need to hire a huge external research or consulting team to test which features your customers will respond best to. By checking your UX audit reports for each variation of the A/B test, you will be able to identify the best way of allocating your resources.

- New product rollouts. A/B tests for mobile apps help reduce the risks associated with the rollout of new features. If developers accidentally release a feature with bugs, or if users simply don’t like it, mobile app A/B testing data will alert them that something is wrong so they can roll the feature back quickly. This enables organizations to have faster release cycles.

- Develop a clearer sense of customer behavior. A/B tests allow you to explore how different segments of your user base respond to variations of the features you’ve chosen to test. The results will reveal what drives the user behavior of each segment.

The ultimate purpose is to elevate and optimize your users’ experience with your app.

Mobile App A/B Testing in Action

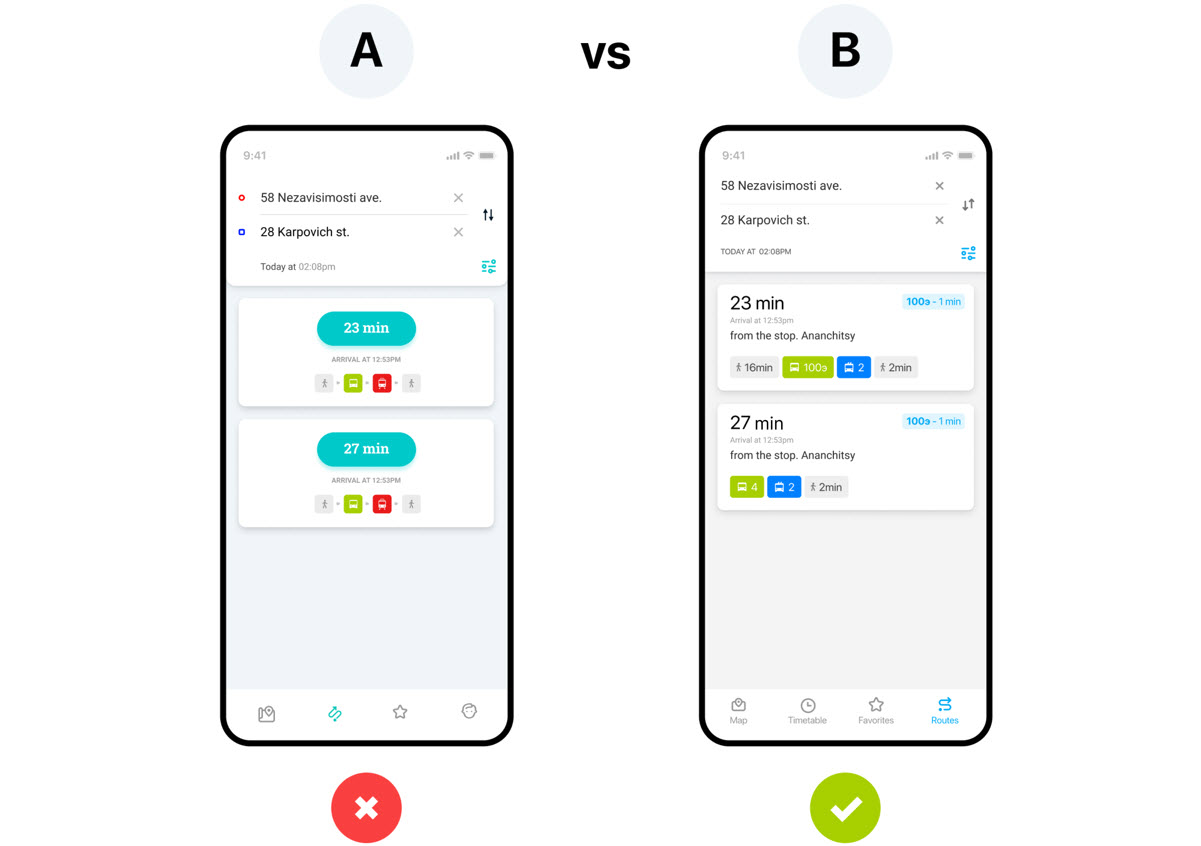

In our transportation app, we had a pop-up window that showed the vehicles stopping at a bus stop. By tapping the route number, a user could go further into the application and see the selected vehicle’s schedule. Our analysis, and the subsequent UX audit report, revealed that users did not make this transition very often, even though the schedule window was one of the most frequently visited.

Our assumption was that users perceived the plates with route numbers to be untappable. To solve this, informed by our UI/UX audit report, our designers created new route number plates that we thought users would be more likely to tap. However, we didn’t want to rely merely on our assumptions. We needed to test whether the new design worked.

To do this we implemented an A/B test for the mobile app we were building.

We split the users into two groups: Group A, the control group, whose users were not exposed to any of the options being tested, and Group B, the experimental group, whose users tested our hypothesis.

When conducting mobile app A/B testing, how we divide these groups, and to what percentage, can depend on the importance of the feature, business goals, number of installations, duration of the feature’s existence, and a wide range of other criteria.

For this case, we placed 90% of users in Group A and 10% into Group B. While you can use more than two groups, to check a larger number of implementations, we recommend always using a control group for your mobile A/B testing that is sizeable enough to act as a benchmark against the other features.
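To make the split concrete, here is a minimal sketch of one common way to assign users to groups: hashing a user ID so that each user always lands in the same group. This is an illustration only, not the code from our transportation app; the function name `assign_group`, the experiment name, and the user IDs are all hypothetical.

```python
import hashlib


def assign_group(user_id: str, experiment: str, treatment_share: float = 0.10) -> str:
    """Return "B" for roughly `treatment_share` of users and "A" for the rest.

    Hypothetical helper: the names and the 10,000-bucket resolution are
    assumptions made for this sketch.
    """
    if not 0.0 <= treatment_share <= 1.0:
        raise ValueError("treatment_share must be between 0 and 1")
    # Hash the experiment name together with the user ID so the same user
    # always gets the same group, and separate experiments split independently.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Reduce the first 8 bytes of the hash to one of 10,000 buckets.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    # Users in the lowest buckets form the experimental group.
    return "B" if bucket < round(treatment_share * 10_000) else "A"


# Example: a 90/10 split like the one described above.
print(assign_group("user-42", "route-plate-redesign"))
```

Because the assignment depends only on the user ID and the experiment name, no lookup table is needed, and a user who reinstalls the app or switches devices still sees the same variation as long as the ID stays the same.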

The result of our mobile A/B test

After running the campaign for 30 days, we observed the following results: the variation outperformed the control with a projected 25% increase in the vehicle’s schedule usage.

Setting a clear time frame will help estimate the differences between the metrics on Group A and Group B. It is important to stick to this time frame, and you should not stop the test early, even if during the initial phases, one group is confidently leading. The waiting is the hardest part.
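Once the time frame has ended, one common way to judge whether the difference between the groups is more than noise is a two-proportion z-test. The sketch below is an illustration under stated assumptions, not the analysis we ran: the function name is hypothetical, and the user and conversion counts are invented purely to show the arithmetic. They are not the data from our test.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Compare conversion rates of control (A) and variation (B).

    Returns (z, two_sided_p_value, relative_lift).
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0 or rate_a == 0:
        raise ValueError("not enough variation in the data to run the test")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    relative_lift = (rate_b - rate_a) / rate_a
    return z, p_value, relative_lift


# Hypothetical counts for illustration only: 9,000 control users and
# 1,000 variation users, with made-up numbers of schedule views.
z, p_value, lift = two_proportion_z_test(conv_a=1800, n_a=9000, conv_b=250, n_b=1000)
print(f"z = {z:.2f}, p = {p_value:.4f}, relative lift = {lift:.0%}")
```

Running this check only once, at the end of the planned period, is what makes the p-value meaningful. Checking repeatedly and stopping as soon as one group looks ahead inflates the chance of a false positive, which is one reason to stick to the time frame.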

Once the waiting is done, if your hypothesis has won, well done. The goal of mobile app A/B testing has been achieved and you can release the new feature to all users. If the experiment failed, that’s totally fine. Your team did their best. Plus, you avoided releasing a suboptimal solution, which could have led to a decreased conversion rate. If your hypothesis fails, you’ll need to rerun the mobile app A/B test with other options. Either way, you’ve gained new insights and information about the application that can be used to further optimize your mobile app.

How to Choose the Right Mobile App A/B Testing Tools

The right A/B testing tools for your mobile app depend on what you’re looking to test and measure. Some questions to ask yourself when exploring your options are:

- How quickly do you want to get started?

- What is the current bandwidth of your engineering team?

- What is your project timeline?

- Do you want to handle this internally or through a skilled outside vendor?

- Does your team have the knowledge to run complex tests?

- How much expertise does your team have with statistical analysis?

- What sort of support do you want to have while working on A/B tests?

- What key features are you looking for/what do you want to test?

Choosing the right A/B testing tools for mobile apps will set you up for success. They will allow you to generate UX audit reports that tell you where you stand before you start testing and at each step of the way.

We pride ourselves on selecting the right mobile A/B testing tools to meet the needs of each client we work with through our five-step testing process:

- Requirements development. We analyze your business and industry and elicit key needs, and set goals and quality metrics accordingly.

- QA strategy. We specify test and document types required for the project, develop project metrics and communication strategy, and provide proof of concept.

- Starting the testing process. We select tools, set up a testing environment, and form the project team.

- Implementation. We carry out testing according to the developed schedule.

- Data collecting and reporting. When ready, we gather statistics and draw up test execution reports.

It’s All Connected: A/B Testing in App UX Design

The results of a UI/UX audit report are only useful if you’re able to successfully test, implement, and iterate on them. To do this, you need a great team. A great team will be able to take these insights and apply them through multiple iterations of A/B tests to discover the right way forward.

A/B testing for your mobile app is an inexpensive method to:

- boost sales,

- develop an understanding of user engagement,

- improve the user experience,

- streamline feature rollout, and

- learn consumer behavior.

It will help increase your ROI because you will learn how to give your users exactly what they want.

For more information on building fantastic applications, feel free to reach out with any questions you have. We’re always happy to help. Stay tuned for our next blog posts on mobile app development.
