Most Popular Options for Mainframe DevOps

There is no “perfect” tool that will meet everyone’s needs.

Every tool was designed to serve a different type of team.

Every tool was designed to operate within a specific context.

And every tool was designed to overcome a specific set of challenges, while capturing a specific set of opportunities.

In short: the tool that delivers miracles for “Person A” might fail to deliver a single meaningful outcome for “Person B”.

To identify the tools that best meet your needs, you must test multiple tools, identify the pros and cons of each, and ultimately pick the options that best align with your unique requirements.

We faced this reality with a recent client.

For the past 18+ months, we have moved this client through a massive DevOps transformation of their mainframe application development capability.

As part of this transformation, we tested, analyzed, and upgraded a few of the foundational mainframe DevOps tools their teams leverage.

In this article, we will dive deep into this evaluation process. We will:

- Outline a framework to evaluate your existing tooling, to see if it might be sufficient to meet your requirements.

- Share our in-depth analysis of the specific DevOps tools for mainframe we evaluated and either kept, or upgraded, within this specific client engagement.

- Provide our perspective on when each of these mainframe DevOps tools might be appropriate to meet your requirements.

- Offer a few tips for how to handle the change management process if you choose to upgrade one or more of your existing mainframe application development tools.

Let’s dive in.

This is Part 6 of a longer series that will tell the full story of our client’s transformation.

- Bringing DevOps to Mainframe Application Development: A Real-World Story

- The Key to Large-Scale Mainframe DevOps Transformation: Building the Right Dedicated Team

- Choosing the Right First Steps in DevOps Mainframe Transformation

- Improving the Initial Mainframe Automation and Creating a “Two Button Push” Process

- How to Select and Implement the Right Tools for Mainframe DevOps Transformation

- Picking the Right Tools: A Deep Dive Into the Most Popular Options for Mainframe DevOps

- Completing the DevOps Pipeline for Mainframe: Designing and Implementing Test Automation

- Making Change Stick: How to Get Mainframe Teams Onboard with New Strategies, Processes, and Tools


The Story So Far…

This client is a multi-billion-dollar technology company that employs hundreds of thousands of people, and uses a central mainframe to drive many of its critical actions.

When they came to us, they were following outdated mainframe application development processes that were more than 20 years old. They partnered with us to transform their mainframe application development process, and to bring a modern DevOps approach to this critical area of their business.

So far, we have shared a top-level overview of this project, discussed the importance of building dedicated teams for mainframe DevOps, explained the initial automation we created for this client, and showed how we improved that initial automation over time.

In our previous article, we shared a top-level, strategic perspective on our mainframe DevOps tool selection process, and how we changed a few of this client’s foundational tools. You can read this article here.

In this article, we will provide our detailed analysis of each of these mainframe application development tools, to help you decide which of them might be useful for your own mainframe DevOps work, which are worth upgrading to, and which you can safely ignore.

Before we begin, let’s revisit the most important step in the tool upgrade process: evaluating your current tooling, and determining whether you really need to make any upgrades at all.

First Things First: Can Your Existing Tools Do the Job?

Sometimes, you really do need to upgrade your tooling.

But often, the “perfect” tool is the tool you are already using.

Think about it.

In most cases, the tool you are already using:

- Meets the baseline technical requirements of the job you are using it for (the same job you are considering a new tool to do).

- Does not need to be implemented, as it’s already in your environment and already well known by your internal teams.

- Has already been approved by management, integrated with existing systems, and folded into everyone’s day-to-day life.

By contrast, implementing any new tool will be disruptive, expensive, and time-consuming, so you had better make sure that your existing tools are not viable options, and that the new tool is truly necessary, before you face the headache of upgrading your toolkit.

To determine if an existing tool might be sufficient for whatever improvements you are looking to drive, ask yourself a few questions:

- Do my existing mainframe application development tools allow me to create smooth, simple solutions?

- Do my existing mainframe application development tools make it easy to integrate new features?

- How much of my existing tool’s functionality am I really using?

- What new features or functions do I seek by upgrading this tool? Are they available within my existing tool, but I’m just not using them?

- What are the key functions that I actually use my existing mainframe application development tool for? Do other tools provide those same features, but either better or with less bloat?

- How well does my team really know this tool’s functionality?

- Is this tool popular in the development community? Is it easy to find specialists who are familiar with it, and happy to work with it?

By running your existing mainframe application development tools through these questions, you will have a better sense of whether or not your existing tools really need to be replaced, or if you just need to use them in a more effective manner.
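One way to make the answers to these questions comparable is to turn them into a small weighted scorecard. The following is a minimal sketch only: the criteria names, the weights, the 0 to 5 rating scale, and the switching margin are all hypothetical assumptions chosen for illustration, not a rubric we used with this client.

```python
from dataclasses import dataclass

# Hypothetical criteria and weights, loosely mirroring the questions above.
# Adjust both to your own priorities; nothing here is a prescribed rubric.
WEIGHTS = {
    "simple_solutions": 3,
    "easy_integration": 3,
    "feature_coverage": 2,
    "team_familiarity": 2,
    "community_popularity": 1,
}


@dataclass
class ToolScore:
    name: str
    ratings: dict  # criterion -> rating from 0 (poor) to 5 (excellent)

    def weighted_total(self) -> float:
        """Return the weighted average rating, on the same 0-5 scale."""
        total_weight = sum(WEIGHTS.values())
        weighted = sum(WEIGHTS[c] * self.ratings.get(c, 0) for c in WEIGHTS)
        return weighted / total_weight


def needs_replacement(current: ToolScore, candidate: ToolScore,
                      margin: float = 1.0) -> bool:
    """Suggest a switch only if the candidate wins by a clear margin,
    because any switch carries disruption, cost, and retraining."""
    return candidate.weighted_total() - current.weighted_total() >= margin


# Illustrative ratings only; real numbers should come from your own teams.
existing = ToolScore("existing tool", {
    "simple_solutions": 3, "easy_integration": 2, "feature_coverage": 4,
    "team_familiarity": 5, "community_popularity": 2,
})
candidate = ToolScore("candidate tool", {
    "simple_solutions": 4, "easy_integration": 5, "feature_coverage": 4,
    "team_familiarity": 2, "community_popularity": 5,
})
print(needs_replacement(existing, candidate))
```

The margin is the important design choice here: it encodes the point made above that a new tool has to be clearly better, not just slightly better, to justify the cost of switching.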

But if you do run your tools through these questions, and determine that you are best served by adopting new systems, then read on.

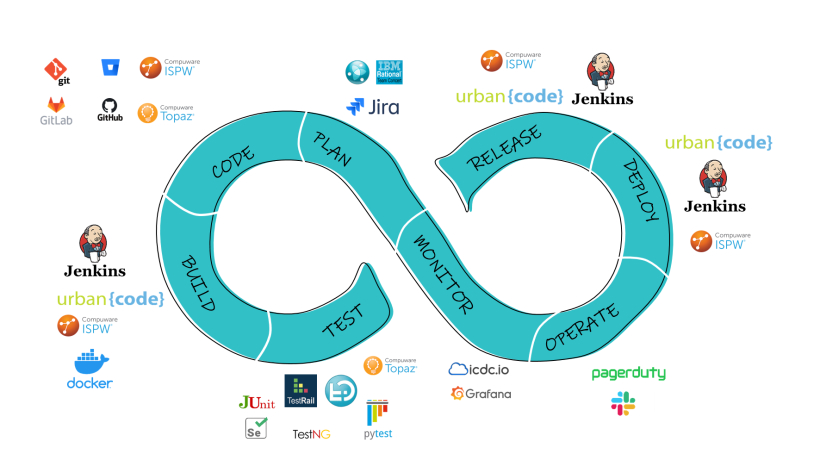

We will now present our detailed analysis of six of the most popular tools for mainframe application development currently on the market:

- IBM® UrbanCode® Deploy (UCD)

- Jenkins®

- IBM® Rational® Team Concert (RTC)

- Jira®

- IBM® Rational® Quality Manager (RQM)

- TestRail®

UCD’s main advantage is the fact that it integrates with mainframes using the z/OS® plugin. This allows UCD to run TSO commands, store and deploy MVS™ datasets, submit JCL jobs, and perform many other core functions of mainframe application development. It also supports other platforms (like Linux®, Windows®, and the Cloud), allowing you to use different machines in one pipeline and take a very wide deployment approach.

UCD is also relatively user-friendly, allowing users to configure processes using a drag & drop mechanism, and not scripting (unlike other popular CI/CD tools, such as Jenkins).

When we use UCD, we like to use CodeStation. This is a special repository, located on the UCD server, that stores all deployed artifacts. It works with both mainframe and distributed files, and allows you to track changes and repeat deployments, making up for the fact that not all mainframe projects have a version control system in place.

Building a DevOps pipeline using UCD requires the integration of several tools, and each phase of development has several alternatives. UCD also has a limited set of plug-ins for third-party software. In this particular client instance, that forced us to write all scripts for TestRail integration from scratch, whereas other tools do not require custom development.

UCD was a great choice for this particular client project, and we believe it is a good choice to drive pipeline development for many mainframe DevOps capabilities. There are potential pitfalls to be wary of (such as ensuring that all UCD agents are installed and configured correctly, and that UCD objects are properly configured to suit the unique needs of your server and application infrastructure), but overall UCD is a robust and versatile platform.

Jenkins gives you a free and open-source automation server. It focuses on automating the parts of mainframe application development that revolve around building, testing, and deploying code through a CI/CD pipeline.

Because Jenkins is so popular, the tool is very widely known by developers, and most other tools used in code development offer plug-ins for Jenkins (especially compared to UCD).

In this specific instance, Jenkins was not the best option for a few reasons.

First, this client was already using UCD, and everyone on their teams knew how to use that tool.

Second, Jenkins did not have a plugin for z/OS, nor did it have integrations with this client’s mainframe.

Third, Jenkins forces you to construct a pipeline using code scripts, while UCD has a graphic interface. The fact you can build pipelines in UCD with simple, intuitive drag-and-drop elements ended up streamlining a lot of our work.

Within this client’s projects, RTC’s main advantage was that it had been in use for several years before we came on board, and many of the client’s end users were very familiar with it. These teams were using RTC in a relatively limited manner (to track code changes only).

RTC also has some broadly applicable benefits. The tool offers a wide range of functionality: it can be used for task recording, project planning, and code building, and it can be integrated with Git to act as a version control system.

RTC also belongs to the Rational family, giving you easy integrations with any other Rational tools you might be using.

RTC doesn’t work as well with UCD as you might hope. While UCD does have an RTC plug-in, it is missing a lot of integrations. Within this project, the plug-in was not able to read or update custom fields, and it could not attach files to a record. As a result, we had to write custom integration code to make it all work.

Developing this custom integration code was challenging. RTC can only receive requests, and send responses, in an OSLC format, which makes the whole process harder than it needs to be. To make matters more frustrating, RTC’s REST API is poorly documented.
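To give a sense of what "an OSLC format" means in practice, here is a minimal Python sketch of how an OSLC-style read request can be shaped. It relies only on two conventions from the OSLC Core 2.0 specification: the `OSLC-Core-Version` header and the `oslc.properties` query parameter for selecting fields. The work item URL in the usage line is a hypothetical placeholder, authentication is omitted, and this is not the integration code we wrote for the client.

```python
import urllib.parse
import urllib.request


def build_oslc_get(resource_url, properties):
    """Build (but do not send) an OSLC 2.0 GET request for one resource.

    resource_url: full URL of the resource; supplied by the caller, since
                  the exact path depends on your server (an assumption here).
    properties:   property names to return, e.g. "dcterms:title".
    """
    # oslc.properties asks the server to return only the listed properties.
    query = urllib.parse.urlencode({"oslc.properties": ",".join(properties)})
    separator = "&" if "?" in resource_url else "?"
    return urllib.request.Request(
        f"{resource_url}{separator}{query}",
        headers={
            # Declares which OSLC version the client expects.
            "OSLC-Core-Version": "2.0",
            # RDF/XML is the baseline OSLC representation.
            "Accept": "application/rdf+xml",
        },
        method="GET",
    )


# Hypothetical URL, for illustration only.
request = build_oslc_get(
    "https://rtc.example.com/ccm/workitem/42",
    ["dcterms:title", "dcterms:identifier"],
)
print(request.full_url)
```

The response comes back as RDF rather than as a plain JSON object, which is a large part of why this kind of integration takes more effort than a conventional REST call.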

Overall, we found it challenging to develop all of the integrations that RTC needed to work properly within this project (though once we finished developing these scripts, the integrations were stable, and we experienced no significant problems). We believe that RTC could be a viable tool if you were to use its full range of functionality, but ultimately it was not the right choice for how this client was using it.

In general, we find that RTC is the best option only if you already have a complete system built out with it, and if that system is working well for you. If so, it will be too much of a hassle to rebuild everything with an alternative tool.

Jira is one of the most popular team collaboration tools available, and with good reason. Jira has a well-documented REST API, and an efficient plug-in for UCD that let us develop the necessary integration scripts very quickly (it only took us about a week to complete all required scripts). Jira also allowed us to create many informative dashboards for management, and easily visualize our DevOps transformation’s deployment and test statistics.
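As an illustration of why a well-documented REST API speeds up integration work, here is a minimal Python sketch that prepares a deployment summary as a comment on a Jira issue, using the REST API v2 comment endpoint. The function name, the shape of the `deployment` dictionary, the issue key, and the server URL are hypothetical, and the Basic authentication shown is an assumption (your instance may require a different scheme). This is a sketch, not the scripts we built for this client.

```python
import base64
import json
import urllib.request


def build_jira_comment_request(base_url, issue_key, user, api_token, deployment):
    """Build (but do not send) a request that adds a deployment summary
    as a comment on a Jira issue."""
    body = (
        f"Deployment {deployment['version']} to {deployment['environment']}: "
        f"{deployment['status']} "
        f"({deployment['tests_passed']}/{deployment['tests_total']} tests passed)"
    )
    url = f"{base_url.rstrip('/')}/rest/api/2/issue/{issue_key}/comment"
    credentials = base64.b64encode(f"{user}:{api_token}".encode()).decode()
    return urllib.request.Request(
        url,
        data=json.dumps({"body": body}).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {credentials}",
        },
        method="POST",
    )


# Hypothetical values, for illustration only.
request = build_jira_comment_request(
    "https://jira.example.com",
    "MF-123",
    "pipeline-bot",
    "not-a-real-token",
    {"version": "1.4.2", "environment": "TEST", "status": "SUCCESS",
     "tests_passed": 48, "tests_total": 50},
)
# urllib.request.urlopen(request) would send it; omitted here on purpose.
```

A pipeline step could call a function like this after each deployment, so that the issue history carries the same deployment and test figures that feed the dashboards.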

Jira is also very useful for modernizing mainframe activities. Most modern mainframe companies are doing their best to move mainframe closer to the “distributed” world, and Jira can play a large, effective, strategic role in this movement.

We ran into one main challenge with Jira on this project, but it was not necessarily the tool’s fault. This client used a single Jira configuration for all of the teams in their organization, including teams we didn’t work with. This caused issues whenever other teams changed the configuration, and it limited some of the customizations we would have preferred to make.

We highly recommend Jira. While we were not involved in the decision to change from RTC to Jira in this particular client instance, we would have made this recommendation on our own if they hadn’t done so themselves.

RQM has good functionality for executing test scripts on a range of machines. It runs these scripts through its command line adapter, which can be installed on either Windows or Linux servers, and which executes the scripts on that machine when they are triggered from RQM’s web interface.

RQM also has a UCD plug-in that allows it to trigger test suite execution directly from UCD. This allowed us to build out our test approach within our pipeline in a very short amount of time.

Like RTC, RQM is overloaded with features that this client was not using, and that most mainframe DevOps teams are unlikely to use. This problem was even worse for RQM than it was for RTC. In RQM, objects have many dependencies on each other, which complicated critical functions and slowed down implementation. Further, RQM lacks robust, accessible documentation, which made each of these challenges even more aggravating to resolve.

We did find the client’s existing RQM adapter very helpful when we were first building out their test automation process. RQM allowed us to concentrate on designing test automation approaches instead of working on technical solutions for script execution. We would recommend RQM if you have a complicated test management structure, and you want to automate testing with custom test execution scripts instead of a specialized framework.

TestRail has a number of advantages over most other test management tools.

First, it has a simple, transparent structure, through which we were able to understand all test automation dependencies in just two hours. By contrast, it was challenging to see connections within RQM, which made it painful, and at times impossible, to diagnose and fix automation failures.

Second, TestRail allows you to add user defined scripts. We used this feature to replace a few missing elements of TestRail with custom JavaScript. For example, we created a GUI “button” to execute test scripts on a remote server, and we added nice features such as “validate test case” to test if input data formats were correct prior to deployment and execution.

Finally, TestRail has a well-documented REST API. This is one of the main selling points for any tool in a mainframe DevOps toolset, and one of the first things we check with any new tool we are evaluating.

Unfortunately, TestRail does not have a UCD plug-in, and we had to create a custom script based on its REST API to compensate. TestRail also did not have the same convenient adapter that RQM had, and we had to again build a custom script based on its REST API. Finally, TestRail was not able to perfectly replicate the RQM functionality we had already created, and we were forced to build workarounds that were not always convenient for users.
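To show roughly what a custom script based on TestRail's REST API can look like, here is a minimal Python sketch that turns the exit code of a test script into a result for one test case, using the API v2 `add_result_for_case` method. The function name, its parameters, the exit-code convention (0 means passed), and the server URL are assumptions made for illustration; this is not the script we wrote for this client.

```python
import base64
import json
import urllib.request

# TestRail's built-in status IDs for two of the default statuses.
STATUS_PASSED = 1
STATUS_FAILED = 5


def build_testrail_result_request(base_url, run_id, case_id, user, api_key,
                                  exit_code, log_tail=""):
    """Build (but do not send) a request that records a test result.

    Assumes the test script signals success with exit code 0; adapt this
    to however your own scripts report failures.
    """
    status_id = STATUS_PASSED if exit_code == 0 else STATUS_FAILED
    payload = {
        "status_id": status_id,
        "comment": f"Script exit code: {exit_code}\n{log_tail}".strip(),
    }
    url = (f"{base_url.rstrip('/')}/index.php?/api/v2/"
           f"add_result_for_case/{run_id}/{case_id}")
    credentials = base64.b64encode(f"{user}:{api_key}".encode()).decode()
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {credentials}",
        },
        method="POST",
    )


# Hypothetical values, for illustration only.
request = build_testrail_result_request(
    "https://testrail.example.com", run_id=12, case_id=345,
    user="pipeline-bot@example.com", api_key="not-a-real-key",
    exit_code=0, log_tail="All assertions passed.",
)
# urllib.request.urlopen(request) would send it; omitted here on purpose.
```

Because a script like this is plain HTTP, a deployment tool without a dedicated TestRail plug-in can still call it as an ordinary scripted step.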

TestRail is best if you require a simple structure for your test management, and if you are able and willing to develop some of your own custom execution tools, or to use a third-party framework for testing.

Making the Change: Our Process for Upgrading to a New Tool

At this point, you should know whether one or more of these mainframe DevOps tools might offer a meaningful upgrade to one or more of your tools.

If you are considering a change, just know this… upgrading an existing tool—even to a demonstrably superior alternative—will always be a bit of a headache.

There will be many stakeholders involved.

There will be resistance from users and executives.

There will be many technical challenges to overcome.

And there will be a stretch of time before you see the benefits of the new tool.

These challenges are, by and large, unavoidable. But there are ways to minimize the headaches and maximize the chances of successfully implementing a new DevOps tool for mainframe into your organization. Here is the process that we typically follow with clients:

Step One: Business Case

Provide management with a technical analysis that explains why your existing tool will not be sufficient over the long term.

Step Two: List Development

Generate a list of viable alternatives that will fit into your systems, processes, budgetary constraints, and security requirements.

Step Three: Management Review

Give management the time and resources to perform their own evaluation of your list, and to select which tools to trial.

Step Four: Tool Trials

Take the tools that management approved for testing, and work with them in a test environment that replicates your operating environment.

Step Five: Tool Recommendation

Provide management with the results of your tool trials, and with your ultimate recommendation for which option you feel performed best.

Step Six: Tool Integration

Integrate the new tool (that management ultimately selected) into your existing pipeline, replicating as many prior processes as possible.

Step Seven: Documentation and Education

Create instructions for how to operate the new tool in your environment, provide hands-on training, and make sure everyone can use the new tool.

We provide more detail on each of these steps, as well as a real-world example of how we followed them with our client, in our previous article in this series.

Overall, it often just takes time to overcome all resistance, and to bring everyone on board with the new mainframe DevOps tool you chose. But, as long as you selected your new tool properly, then given enough time your users will get used to the new tool and come to see why the switch was worth it, and management will begin to see the tangible benefits you promised them.

At this point, the tool will become as normal, natural, and assumed in your mainframe application development pipeline as your previous tools.

Next Steps: Our Test Automation Process, In Depth

In future articles, we will return to the subject of tooling, and discuss more of the tools that we used for this client’s mainframe DevOps transformation.

But within our next article, we will return to the more visible steps in this project. We will perform a deep dive into the test automation process that we developed for this client.

If you would like to learn more about this project without waiting, then contact us today and we will talk you through the elements of driving a DevOps transformation for mainframe that are most relevant to your unique context.
